My response, after seeing it countless times, is simple: No.

The agent isn't the problem—the setup is. The model isn't failing; the architecture and the process are lacking rigor. We have been conditioned by the hype to treat autonomous AI systems like advanced chatbots. We build complex, multi-step pipelines connecting databases and APIs, but we operate under the illusion that all we need is a clever prompt.

We hand over an undefined scope and then wonder why the context window implodes in the eleventh step.

This problem is no longer about the sheer compute power of a model. It is fundamentally a problem of expectations and discipline. If you are building production-grade, autonomous systems, you must stop treating the LLM as a smart text generator and start treating it like the highly unpredictable, expensive, and brilliant piece of infrastructure that it is. Most of the failures you encounter, and likely the one waiting in your own codebase, stem from this single conceptual misunderstanding.

Failure Mode 1: You Bought a Racehorse and Asked It to Do Your Taxes

The most common architectural flaw is model mismatch. It’s a failure of specification, not of computation.

We are addicted to the concept of "all-in-one." We throw the biggest, most powerful, and most resource-intensive models (Opus-level engines) at every single problem, assuming brute force intelligence will solve any gap in our planning.

This isn't just wasteful; it's architecturally incorrect.

The critical insight is that specs beat vibes. Every time.

The problem is failing to define the precise cognitive requirement for the task. If the problem is well-defined—clear specs, acceptance criteria, and enumerated edge cases—you don't need the full horsepower of the most expensive model. A tiered approach is required:

- The Sprinter (Haiku/Sonnet): For well-defined, constrained tasks (e.g., data formatting, validation). You will spend more time in review, but you save significant compute budget, and you catch your own bad specifications faster.

- The Universalist (Opus): Reserved for the messy, tangled problems where the problem space is ill-defined, or where the complexity demands general reasoning to scope the entire solution.

If you use an overpowered model for a trivial job, you incur unnecessary cost and complexity. If you use a lightweight model on a massive, unstructured problem, you get garbage. The architect’s job is to match the required intelligence level to the task’s domain difficulty.
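As a rough illustration of tiered selection, here is a minimal Python sketch. The `TaskSpec` fields, the `pick_tier` function, and the tier labels are all hypothetical names invented for this example, and the labels are placeholders rather than real model IDs. The rule it encodes is the one above: only a task with clear specs, acceptance criteria, and enumerated edge cases goes to the cheaper tier.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SPRINTER = "small-fast-model"         # placeholder label, not a real model ID
    UNIVERSALIST = "large-general-model"  # placeholder label, not a real model ID


@dataclass
class TaskSpec:
    # Hypothetical flags describing how well the task has been specified.
    has_clear_spec: bool
    has_acceptance_criteria: bool
    edge_cases_enumerated: bool


def pick_tier(task: TaskSpec) -> Tier:
    """Route fully specified tasks to the cheap tier, everything else to the large one."""
    well_defined = (
        task.has_clear_spec
        and task.has_acceptance_criteria
        and task.edge_cases_enumerated
    )
    return Tier.SPRINTER if well_defined else Tier.UNIVERSALIST


formatting_job = TaskSpec(True, True, True)
vague_job = TaskSpec(False, False, False)
print(pick_tier(formatting_job).name)
print(pick_tier(vague_job).name)
```

The useful side effect of a router like this is that it forces the question "is this task actually specified?" before any tokens are spent.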

Failure Mode 2: You Skipped the Blueprint

The most dangerous developer habit is the default: "Prompt with 'build me a thing' and hope."

It feels fast. You give a high-level prompt—"Build me a recommendation engine"—and the model provides a promising skeleton. You, flush with the momentum of the initial prompt, begin coding.

This is the fastest path to the most brittle, unmaintainable, and scope-creeping garbage.

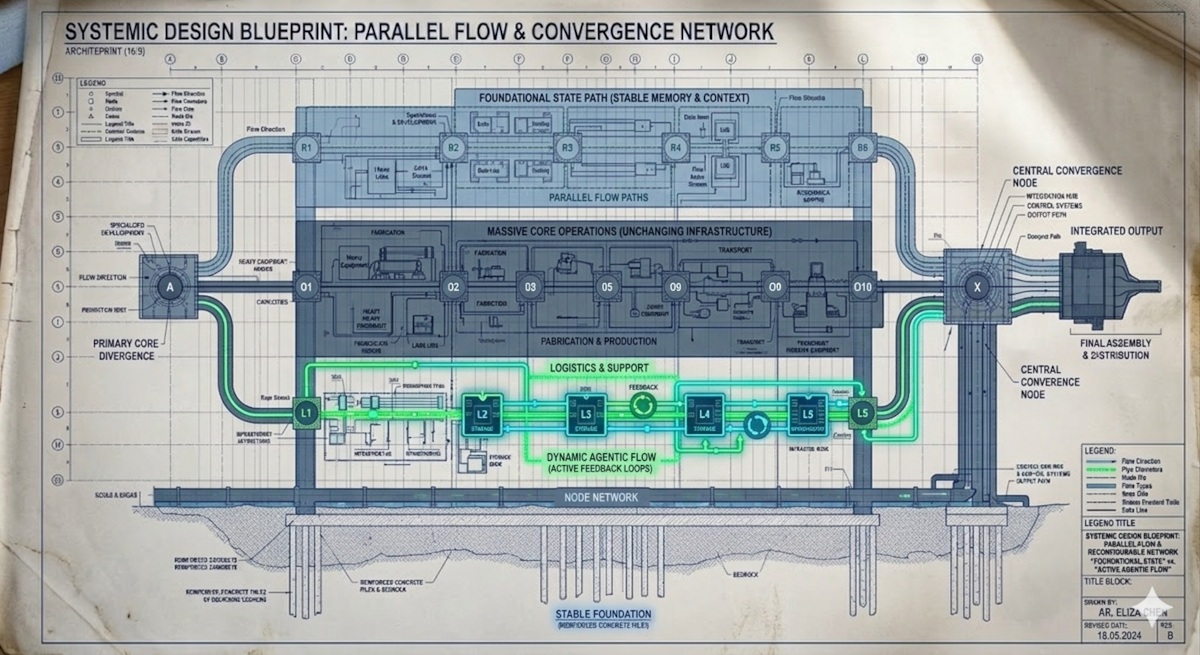

The fundamental prerequisite for any complex, autonomous system is a blueprint that exists outside of the chat interface. The process cannot start with the first line of code.

You must spend hours—many hours—in the planning phase. This is where the LLM acts as your highly paid, highly attentive rubber duck.

The technical process must generate more than just a functional goal; it requires:

- Meaningful Tech Stack: A clear rationale for every component.

- Desired Outcome: The ideal, step-by-step narrative.

- Acceptance Criteria: Binary, testable pass/fail checks.

- Test Scenarios: Positive, negative, edge, and error cases.

- Explicit Non-Goals: Critically, what are you not building? These non-goals are mandatory guardrails.

Without this rigorous pre-work, you do not get your thing. You get a thing: a vague, overgrown monster whose architecture is dictated by whatever the model happens to be capable of, rather than by the actual constraints of the problem domain.
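One way to keep the blueprint outside the chat interface is to hold it as a structured record and refuse to start until every section is filled in. The sketch below is only an assumption about how that might look: the `Blueprint` class, its field names, and `missing_sections` are invented for illustration and mirror the five items listed above.

```python
from dataclasses import dataclass, field


@dataclass
class Blueprint:
    tech_stack: dict[str, str] = field(default_factory=dict)       # component -> rationale
    desired_outcome: list[str] = field(default_factory=list)       # step-by-step narrative
    acceptance_criteria: list[str] = field(default_factory=list)   # binary pass/fail checks
    test_scenarios: dict[str, list[str]] = field(default_factory=dict)  # kind -> cases
    non_goals: list[str] = field(default_factory=list)             # what is NOT being built

    def missing_sections(self) -> list[str]:
        """Return the names of empty sections and any missing test-scenario kinds."""
        missing = [name for name, value in vars(self).items() if not value]
        required_kinds = {"positive", "negative", "edge", "error"}
        absent = required_kinds - set(self.test_scenarios)
        if self.test_scenarios and absent:
            missing.append("test_scenarios: " + ", ".join(sorted(absent)))
        return missing


draft = Blueprint(
    acceptance_criteria=["returns at most 10 items"],
    test_scenarios={"positive": ["known user with history"]},
)
print(draft.missing_sections())
```

A gate like `if draft.missing_sections(): stop` is crude, but it makes "plan first" an enforced step rather than a good intention.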

Failure Mode 3: You Have No Memory Strategy

The single biggest bottleneck in production-grade, autonomous agents is forgetting.

When you operate only within the confines of the chat, you operate under the illusion of an unlimited context window. In a real, multi-step production pipeline, relying on prompt history is a high-stakes gamble. The LLM’s memory is not a simple append-to-buffer function; it is a finite, expensive, and depletable resource.

We are forced to implement state management as a first-class citizen.

This is why platform advancements like the Memory Bank and Event Compaction are mission-critical. They allow the agent to store long-term learnings, ensuring that if it fails on Monday, it can recall the failure in its plan on Tuesday.

If your reasoning loop exceeds roughly 15 complex steps, or runs for more than 10 minutes without a formal state compaction, you are building a beautiful, complex memory leak. Stateful memory is not a feature; it is an infrastructure requirement.
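To make "formal state compaction" concrete, here is a minimal sketch, assuming a simple in-process state object. It is not the API of Memory Bank or any event compaction feature; `AgentState`, `record`, and `compact` are hypothetical names, and the summarizer is a deliberately naive stand-in that keeps only events prefixed with `FAIL` or `DECISION`. The step threshold is the rule of thumb from the paragraph above.

```python
from dataclasses import dataclass, field

MAX_STEPS_BEFORE_COMPACTION = 15  # rule-of-thumb threshold from the text above


@dataclass
class AgentState:
    summary: str = ""                                        # durable, compacted memory
    recent_events: list[str] = field(default_factory=list)  # raw step-by-step history

    def record(self, event: str) -> None:
        """Append an event and compact once the step budget is reached."""
        self.recent_events.append(event)
        if len(self.recent_events) >= MAX_STEPS_BEFORE_COMPACTION:
            self.compact()

    def compact(self) -> None:
        # Stand-in summarizer: keep only failures and decisions.
        # A real system would likely call a model or a memory service here.
        kept = [e for e in self.recent_events if e.startswith(("FAIL", "DECISION"))]
        self.summary = "\n".join(filter(None, [self.summary, *kept]))
        self.recent_events.clear()


state = AgentState()
for i in range(15):
    state.record("FAIL: upstream API timed out" if i == 3 else f"step {i}")
print(state.summary)
print(len(state.recent_events))
```

The point of the design is that the raw history is disposable while the summary is not: what the agent learned from Monday's failure survives, and the fourteen routine steps around it do not occupy context on Tuesday.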

Failure Mode 4: Your Prompts Are Legacy Code

The clearest sign of architectural decay in the industry is the continued reliance on the conversational prompt. The era of merely "chatting with an LLM" is over.

The core shift must be: from prompts to protocols.

Relying on complex, monolithic prompts to manage tool calling, state, and decision-making means building with brittle glue code. Modern systems should use standards like the Model Context Protocol (MCP). The protocol gives your agent a standard way to "speak" to your internal services, so you are not hand-writing a brittle wrapper for each one.

Furthermore, this protocol shift mandates a change in documentation:

- The Single Source of Truth: Adopt the AGENTS.md philosophy. When a rule is true everywhere, for the operator or the code, it belongs in one central file, which the agent references. Never scatter operating principles across disparate skills or chats.

- Optimizing for the Agent: Finally, every instruction must be stripped of human-friendly flair. Instructions load into context and every word costs tokens. Strip ambiguity, remove all unnecessary narrative flow, and optimize the content for machine consumption only.

Failure Mode 5: You Didn't Define "Done"

The problem of "scope creep" is fundamentally a governance failure.

If you build an agent and merely tell it, "Analyze this data," it will happily analyze the data and then keep going: write a comprehensive executive summary, build a dashboard, and send out three follow-up action items, even if all of that is completely out of scope.

Acceptance criteria and explicit non-goals are the machine's tripwires. They tell the agent precisely when the task scope ends.

And critically, you must view the end of the pipeline not as a "review," but as a rigorous test suite. The agent's output must be subjected to unit, integration, and E2E testing. The acceptance criteria you defined during the blueprint phase must become the automated test cases.
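Here is a minimal sketch of acceptance criteria and non-goals expressed as automated checks, using the "Analyze this data" example. Everything in it is assumed for illustration: the shape of the `output` dictionary, the two criteria, the non-goal names, and the `evaluate` function are hypothetical, not part of any framework.

```python
from typing import Callable

# Hypothetical agent output for an "Analyze this data" task.
output = {
    "analysis": "Revenue rose 4% quarter over quarter.",
    "artifacts": ["analysis"],
}

# Each acceptance criterion is a named, binary check.
ACCEPTANCE: dict[str, Callable[[dict], bool]] = {
    "analysis is present": lambda out: bool(out.get("analysis")),
    "analysis is under 500 characters": lambda out: len(out.get("analysis", "")) < 500,
}

# Non-goals act as tripwires: producing any of these artifacts is a failure.
NON_GOALS = {"dashboard", "executive_summary", "follow_up_actions"}


def evaluate(out: dict) -> list[str]:
    """Return a list of failure messages; an empty list means the task is done."""
    failures = [name for name, check in ACCEPTANCE.items() if not check(out)]
    out_of_scope = NON_GOALS & set(out.get("artifacts", []))
    failures += [f"non-goal produced: {a}" for a in sorted(out_of_scope)]
    return failures


print(evaluate(output))
```

Because the non-goals are checked the same way as the criteria, an agent that "helpfully" builds a dashboard fails the run just as surely as one that skips the analysis.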

The Setup Checklist: What Actually Works

If you are building any production-level agent, adopt this architectural discipline:

- Match Model to Task: Use tiered model selection. Treat the model like a specialized tool—a sprinter for simple tasks, a deep thinker for complex ones.

- Plan First, Code Last: Spend hours on defining the blueprint (the non-goals are non-negotiable).

- Stateful Memory: Externalize all long-term knowledge using dedicated memory infrastructure. Never rely on the prompt history for anything critical.

- Protocol-Native Tools: Use standardized protocols and toolkits (MCP, ADK) to ensure true service interoperability and avoid writing custom middleware.

- Governance: Implement a system (Agent Gateways) to enforce blast radius limiting and granular read/write permissions.

- Test, Don't Review: Automate everything. Every step that counts as successful must have passed a coded test, and results should be cross-checked across multiple model types to find blind spots.
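The Governance item in the checklist above can be sketched as a small gate that every tool call passes through. This is an assumption-heavy illustration, not the interface of any real agent gateway product: `Policy`, `Gateway`, `authorize`, and the resource names are hypothetical, and the blast-radius limit is modeled crudely as a cap on writes per run.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    readable: frozenset[str]   # resources the agent may read
    writable: frozenset[str]   # resources the agent may modify
    max_writes: int            # crude blast-radius limit per run


class Gateway:
    def __init__(self, policy: Policy) -> None:
        self.policy = policy
        self.writes = 0

    def authorize(self, action: str, resource: str) -> bool:
        """Allow reads and writes only on listed resources; cap total writes."""
        if action == "read":
            return resource in self.policy.readable
        if action == "write":
            allowed = (
                resource in self.policy.writable
                and self.writes < self.policy.max_writes
            )
            if allowed:
                self.writes += 1
            return allowed
        return False  # unknown actions are denied by default


gateway = Gateway(Policy(frozenset({"orders"}), frozenset({"drafts"}), max_writes=1))
print(gateway.authorize("read", "orders"))
print(gateway.authorize("write", "orders"))
```

Deny-by-default is the important choice here: anything the policy does not name, the agent cannot touch.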

The models are not failing you. Your process is. We have reached a maturity point where the tools are ready. The bottleneck has fully shifted from computational power to human discipline.

If your current setup lacks formalized specs, persistent memory, clear protocols, and a rigorous testing pipeline, then no model release will save you. You will merely have a faster, more expensive, highly sophisticated failure.

The future of autonomous AI is not in the model itself, but in the robust, structured container around it.

Build the structure first. Then, and only then, should you worry about which model performs the task.

What is the biggest architectural or specification failure you’ve seen lately? Share your thoughts in the comments.

Dallum Brown

Writer and curator exploring the impact of technology on everyday life.
