If a large language model (LLM) needs to function like an encyclopedia, today's models are phenomenal. If they need to function like a research team, they are only starting to wake up.

The critical breakthrough happening in the open-source space, spearheaded by competing Chinese labs like DeepSeek and Moonshot AI, is not about a marginally higher score on MMLU. It’s about context endurance, tool reliability, and the ability to operate without a human babysitter.

I have been running Kimi K2.6 locally since release, routing it through my own agent infrastructure. The difference between a model that answers questions and one that sustains a twelve-hour research thread is not incremental. It is categorical.

The industry's focus has fundamentally shifted. The question is no longer, "Which model is the smartest?" but rather, "Which architecture can sustain a complex goal across twenty disconnected steps?"

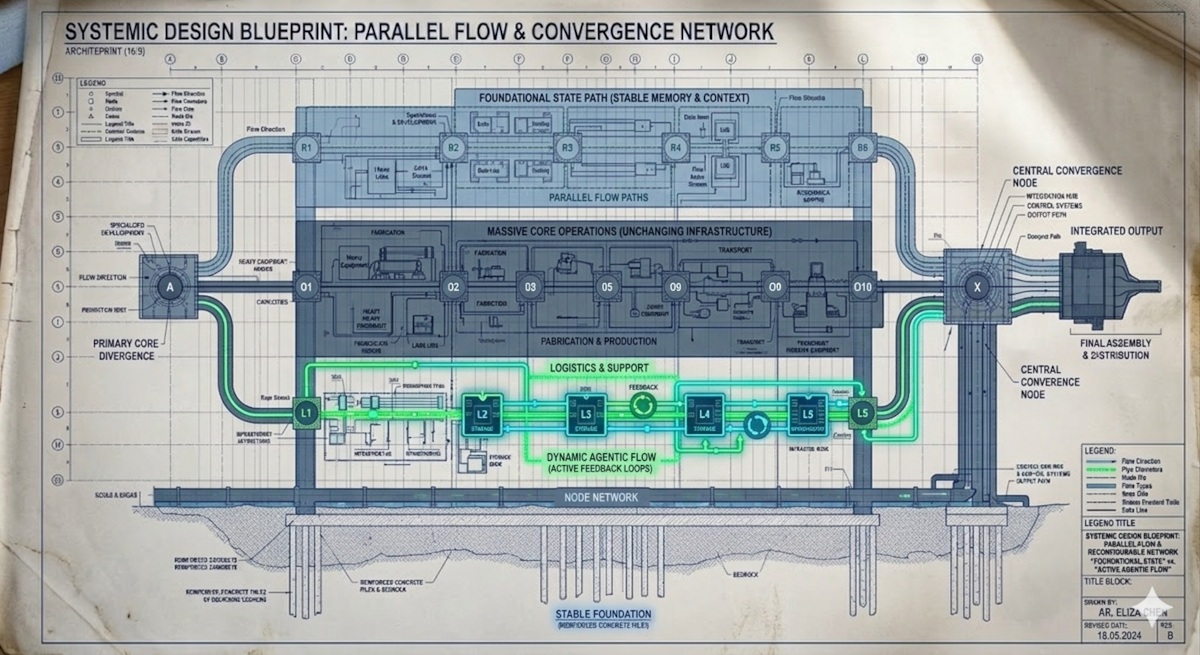

DeepSeek V4 and Kimi K2.6 are not just two model releases; they represent two radically different architectural bets on what the autonomous AI future will look like. Understanding this difference—infrastructure versus ecosystem—is more valuable right now than knowing the current leaderboard rankings.

The New Metric: From Intelligence to Autonomy

For years, model development was a performance arms race: more parameters, bigger benchmarks, higher scores. The race was elegant but ultimately limited, because an LLM on its own, no matter how smart, is inherently reactive: it answers what you ask.

The new generation of open-source models, however, is designed to be proactive. These models are engineered for agentic workflows.

If your current AI setup requires you to prompt it, review its output, fix its mistakes, and then prompt it again—you are not using an agent. You are managing a very expensive, slow chatbot.

The labs behind these two models recognize this limitation and are building systems, not just weights.

DeepSeek V4: The Infrastructure Play

DeepSeek's strategy is defined by efficiency, scale, and a relentless focus on stability. They aren't selling a magic brain; they are selling a platform that can be reliable at scale.

The V4 series—including the V4-Pro and V4-Flash variants—is an infrastructure powerhouse. Their key selling point is architectural efficiency, centered around techniques like DSA (DeepSeek Sparse Attention) and token-wise compression.

The core promise here is capacity. By making the 1-million-token context window the default, DeepSeek eliminates the concept of "premium context." The message is that long memory should be the expected standard, not a luxury.

To understand why this matters in practice, consider a real workflow: dumping an entire 500,000-token codebase into context and asking the model to trace a bug across fifteen files, three frameworks, and a legacy migration. With a 128K window, you are doing surgery with a butter knife—chunking, summarizing, and praying the model remembers what you told it in step three. With a 1M window, the model holds the entire corpus in working memory. The difference is not convenience. It is capability.
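To put rough numbers on that butter knife, here is a back-of-the-envelope sketch of how many separate passes a corpus needs at each window size. The 8,000-token reserve for instructions and output and the 2,000-token overlap between chunks are my own illustrative assumptions, not vendor figures, and `chunks_needed` is a hypothetical helper.

```python
import math


def chunks_needed(corpus_tokens: int, window_tokens: int,
                  reserved_tokens: int = 8_000,
                  overlap_tokens: int = 2_000) -> int:
    """Number of passes required to show the whole corpus to the model.

    reserved_tokens: room kept for the prompt and the answer (assumed value).
    overlap_tokens: tokens repeated between consecutive chunks (assumed value).
    """
    usable = window_tokens - reserved_tokens
    if usable <= overlap_tokens:
        raise ValueError("window too small for the chosen reserve and overlap")
    if corpus_tokens <= usable:
        return 1
    # The first chunk covers `usable` tokens; each later chunk
    # contributes only (usable - overlap) tokens of new material.
    step = usable - overlap_tokens
    return 1 + math.ceil((corpus_tokens - usable) / step)


corpus = 500_000
print(chunks_needed(corpus, 128_000))    # 5 passes, each blind to the others
print(chunks_needed(corpus, 1_000_000))  # 1 pass, everything in view at once
```

Five passes means five places where a cross-file dependency can fall between chunks, plus a summarization layer to stitch the results back together. One pass means none of that machinery exists.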

Furthermore, the dual-mode setup (thinking and non-thinking), together with API compatibility with both the OpenAI and Anthropic formats, sends a clear signal: DeepSeek is building a system designed to be plugged into every existing stack.
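What format compatibility buys you in practice is that switching providers becomes a configuration change rather than a rewrite. A minimal sketch, assuming an OpenAI-style chat-completions request shape: the base URL, model ID, and API key below are placeholders I made up, so check the provider's own documentation for the real values. The request is only assembled here, never sent.

```python
import json


def build_chat_request(base_url: str, model: str,
                       user_prompt: str, api_key: str) -> dict:
    """Assemble an OpenAI-style chat-completions request without sending it."""
    return {
        "url": base_url.rstrip("/") + "/chat/completions",
        "headers": {
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        "body": json.dumps({
            "model": model,
            "messages": [{"role": "user", "content": user_prompt}],
        }),
    }


# Hypothetical values: swap in the provider's documented base URL and model ID.
request = build_chat_request(
    base_url="https://api.example.com/v1",
    model="example-model",
    user_prompt="Summarize this repository.",
    api_key="sk-placeholder",
)
print(request["url"])
```

Everything that varies between providers lives in the two strings passed at the call site; the rest of the agent stack does not need to know which model is on the other end.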

Their message is blunt: We are creating the stable foundation you can migrate to. The impending hard cutover date for their older chat and reasoning models isn't just a deprecation notice; it's a declaration that the new V4 architecture is the only stable way forward.

Kimi K2.6: The Ecosystem Play

If DeepSeek is building a highly efficient highway, Kimi is building a specialized, multi-department workforce.

While initially positioned as an open-source coding specialist, the true story of Kimi K2.6 is the agent swarm.

Kimi is moving beyond simple code completion. Their design is explicitly code-driven, enabling full-stack app development with authentication, database integration, and visual understanding. The breakthrough isn't in the model's intelligence alone, but in the framework that allows multiple, heterogeneous agents to coordinate in parallel.

In practice, this looks less like "one smart assistant" and more like a team of three to five specialized workers: a research agent that gathers requirements and context, a coding agent that implements features, a review agent that catches errors and suggests refactors, and a synthesis agent that assembles the final output. Each operates with its own state, its own tools, and its own memory—coordinated by a central orchestrator rather than a single monolithic prompt.
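To make the shape of that team concrete, here is a toy sketch of an orchestrator handing work down a line of specialists, each keeping its own memory. This is not Kimi's actual swarm framework: `Agent`, `Orchestrator`, and the lambda "workers" are hypothetical stand-ins for real model calls and tools.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    work: Callable[[str], str]  # stand-in for a model call plus tools
    memory: list[str] = field(default_factory=list)  # private per-agent state

    def run(self, task: str) -> str:
        result = self.work(task)
        self.memory.append(result)  # each agent remembers only its own output
        return result


class Orchestrator:
    """Routes the artifact from one specialist to the next, in order."""

    def __init__(self, agents: list[Agent]):
        self.agents = agents

    def execute(self, brief: str) -> str:
        artifact = brief
        for agent in self.agents:
            artifact = agent.run(artifact)
        return artifact


team = [
    Agent("research", lambda t: f"requirements({t})"),
    Agent("coding", lambda t: f"code({t})"),
    Agent("review", lambda t: f"reviewed({t})"),
    Agent("synthesis", lambda t: f"final({t})"),
]
result = Orchestrator(team).execute("login system")
print(result)  # final(reviewed(code(requirements(login system))))
```

The sequential hand-off is the simplest possible coordination policy. A real swarm would add parallel branches, retries, and shared state, but the principle of isolated agents behind one coordinator is the same.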

We are seeing the implementation of proactive agents via frameworks that allow extended, semi-autonomous operation. In our own workflow, migrating to Hermes Agent and running Kimi K2.6 as the underlying model has meant agents that can continue research, iterate on drafts, and generate solutions across multi-hour sessions without continuous micro-management. The model does not just answer; it persists.

The core promise here is collaboration. Kimi isn't selling the model weights; they are selling the process—a coordinated, adaptive, and scalable team of specialized AI workers.

What This Means for Developers (The Synthesis)

The benchmark arms race is entering a state of equilibrium. Nobody is suddenly going to declare a winner based on a single MMLU score. The market, and the expert developer, is finally prioritizing three architectural components:

- Context Endurance: Does the system lose coherence after 10 steps? (DeepSeek's focus.)

This is the silent killer of most agent deployments. You build a beautiful pipeline, test it on a three-step task, and it works perfectly. Then you hand it a real project—twenty steps, four context switches, two external API failures—and by step fourteen the model has forgotten its own instructions. DeepSeek's bet is that sheer context capacity solves this by giving the model enough room to maintain its own state.

- Tool Reliability: Does the agent reliably interact with external APIs and databases? (Kimi's focus.)

An agent that calls tools inconsistently is worse than useless—it is destructive. A model that writes a file, then tries to read it back using the wrong path, then hallucinates a fix, then corrupts your working directory: this is the reality of most "agent" demos. Kimi's swarm approach isolates tool use to specialist agents, reducing the blast radius when one component hallucinates.

- Multi-Step Autonomy: Can the system take a vague, high-level goal and decompose it into a full project plan?

This is the holy grail. "Build me a login system" is not a prompt. It is a project brief. The difference between a chatbot and an agent is whether the system can break that brief into requirements, research, implementation, testing, and documentation—then execute each phase without you holding its hand.
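The "blast radius" idea from the tool reliability point above can be sketched in a few lines: give each agent a file tool that physically cannot reach outside its own working directory. This is my own illustration of the principle, not code from either lab, and `SandboxedFiles` is a hypothetical helper.

```python
import tempfile
from pathlib import Path


class SandboxedFiles:
    """File tool confined to one agent's working directory (hypothetical helper)."""

    def __init__(self, root: Path):
        self.root = root.resolve()
        self.root.mkdir(parents=True, exist_ok=True)

    def _safe_path(self, relative: str) -> Path:
        candidate = (self.root / relative).resolve()
        # The target must sit strictly inside the sandbox; this rejects
        # absolute paths and ../ traversal once the path is resolved.
        if self.root not in candidate.parents:
            raise PermissionError(f"{relative!r} is outside sandbox {self.root}")
        return candidate

    def write(self, relative: str, text: str) -> None:
        path = self._safe_path(relative)
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(text)

    def read(self, relative: str) -> str:
        return self._safe_path(relative).read_text()


with tempfile.TemporaryDirectory() as tmp:
    files = SandboxedFiles(Path(tmp) / "coding_agent")
    files.write("notes/plan.txt", "step 1")
    print(files.read("notes/plan.txt"))
    try:
        files.write("../review_agent/plan.txt", "oops")
    except PermissionError as err:
        print("blocked:", err)
```

When the coding agent hallucinates a path, the worst case is a `PermissionError` in its own log rather than a corrupted directory belonging to another agent.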

When an AI setup fails in the real world, it rarely fails because the underlying model is "dumb." It fails because the system lost the plot, the thread, or the scope after step 12. Both DeepSeek and Kimi are explicitly optimizing for this continuity.
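You can measure "losing the plot" instead of guessing at it. Below is a minimal sketch of an endurance probe: plant a standing instruction at the start, run many steps, and record the first step where the reply stops honoring it. The `Model` callable, the marker string, and the forgetful stub (which only sees the last 24 transcript entries) are all assumptions for illustration; in a real test you would swap the stub for a call to your own agent stack.

```python
from typing import Callable, Optional

# Takes the transcript so far and returns the next reply.
Model = Callable[[list[str]], str]


def first_drift_step(model: Model, steps: int,
                     marker: str = "[TASK-7]") -> Optional[int]:
    """Return the 1-based step where the reply first drops the marker, or None."""
    transcript = [f"Standing instruction: begin every reply with {marker}."]
    for step in range(1, steps + 1):
        transcript.append(f"Step {step}: continue the project.")
        reply = model(transcript)
        transcript.append(reply)
        if not reply.startswith(marker):
            return step
    return None


def forgetful_stub(transcript: list[str]) -> str:
    """Toy model that only 'sees' the last 24 transcript entries."""
    visible = transcript[-24:]
    remembers = any("Standing instruction" in line for line in visible)
    return "[TASK-7] working" if remembers else "working"


print(first_drift_step(forgetful_stub, steps=20))  # 13
print(first_drift_step(forgetful_stub, steps=10))  # None
```

The stub survives twelve steps and drifts on the thirteenth, the moment the instruction scrolls out of its visible window. A three-step demo would never have caught it, which is exactly why short tests flatter agent pipelines.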

A Comparative Look: Bet, Infrastructure, and Risk

| Feature | DeepSeek V4 (Infrastructure Bet) | Kimi K2.6 (Ecosystem Bet) |
|---|---|---|
| Core Philosophy | Stability, Efficiency, Universal API Compatibility | Orchestration, Integration, Dedicated Workforce |
| Key Strength | Default 1M context; robust, measurable efficiency gains. | Swarms; ability to execute multi-agent, full-stack goals. |
| Best For | Projects needing vast context retention (e.g., analyzing entire codebases or legal briefs). | Complex dev loops and iterative, research-heavy product builds. |
| Architectural Risk | If the theoretical efficiency gains can't be matched in practice, adoption slows. | Risk of ecosystem lock-in if the "swarm" concept becomes proprietary or overly complex. |

What to Watch For (The Critical Lens)

A simple comparison of features is insufficient. The real litmus test for these architectures lies in their weakest points and their real-world performance under stress. I recommend watching for these three things:

- Context Coherence vs. Capacity: Can DeepSeek's massive 1M context truly hold coherence, or does reasoning quality degrade toward the edges of the window? Capacity is easy; deep, sustained coherence is hard.

- Swarm Composition vs. Parallel Running: Do Kimi's agents truly compose a solution (i.e., agent A feeds its output into agent B for refinement), or do they just run parallel processes and hope for the best? Composition requires true state management.

- The Open-Source Leadership Shift: The reality is that the labs still publishing fully open weights are predominantly Chinese. Western leaders like OpenAI and Anthropic have gone closed. Meta released open models but now keeps its best work internal. Google's Gemma 4 line is a commendable exception. The question is no longer "which country builds the best model?" but rather, "who is actually carrying the open-source torch?" DeepSeek and Kimi are doing the work that Western labs have abandoned. Which architecture gets adopted by the global community now that the center of open-source gravity has shifted?

A Paradigm Shift

This is not a "which one wins" post. It is a "the game changed" post.

The open-source frontier has finally transcended the need to merely imitate the closed-source giants on a leaderboard. The race is no longer about brute-force brilliance; it is about systemic reliability.

DeepSeek and Kimi are both deeply smart, but they see the future from two different vantage points. DeepSeek is building the bedrock—the durable, measurable, global infrastructure. Kimi is building the dynamic engine—the coordinated, specialized workforce.

Your move: stop judging a model by the quality of a single-turn answer. Instead, give it a complex project goal and walk away for 24 hours.

Build a system that operates independently for long periods of time. That is the frontier.

Dallum Brown

Writer and curator exploring the impact of technology on everyday life.
