Developed by Google, Gemma 4 is positioned not as a routine iteration but as a major step forward in open-weights model architecture. Built on the same research and technology that powered Gemini 3, Gemma 4 aims to address core limitations of today's AI landscape.

For developers and enterprises, the main appeal of Gemma 4 lies in its combination of state-of-the-art intelligence and a commitment to transparency and accessibility. It is released under the commercially permissive Apache 2.0 license, setting a new standard for the open model space.

Core Identity: Intelligence Meets Trust

At its heart, Gemma 4 is designed to transcend traditional chat interfaces. It is engineered to tackle complex reasoning, multi-step planning, and mission-critical enterprise workloads, making it Google's most intelligent offering in the open model ecosystem.

- Trust and Sovereignty: The model provides a trusted and transparent foundation, which is crucial for sovereign organizations and large enterprises that cannot compromise on maintaining complete control over their AI infrastructure.

- Architectural Revolution: This isn't just an update; it's a full architectural rethinking, purpose-built for adaptability and scale.

- Flexible Scale: The model portfolio offers several parameter sizes (E2B, E4B, 26B, 31B). This range lets developers trade off available compute against performance, whether running on a mobile chip or a large cloud cluster.

Expanding AI Boundaries: Multimodality and Context

A major differentiator for Gemma 4 is its ability to understand and reason across multiple data types, combined with a very large context capacity.

Multimodal Reasoning: Seeing and Hearing the World

Gemma 4's native capabilities extend far beyond simple text generation. It processes sophisticated inputs, allowing for highly integrated applications:

- Text: Standard, sophisticated language comprehension and generation.

- Vision: The model supports dynamic vision token budgets, enabling detailed and accurate analysis of images.

- Audio & Video: True multimodal capabilities are supported across text, image, audio, and video inputs, making it an all-encompassing reasoning engine.

Vast Memory, Superior Coherence

The contextual capacity of Gemma 4 is notably robust, boasting context windows up to 256K tokens in its medium-sized variants. This vast memory grants the model the ability to:

- Maintain coherence over extremely long interactions.

- Process and analyze complex documents (entire books, multi-hour meeting transcripts) in a single, cohesive context.

- Deliver best-in-class reasoning, making it instrumental for sophisticated, multi-step coding and data analysis tasks.

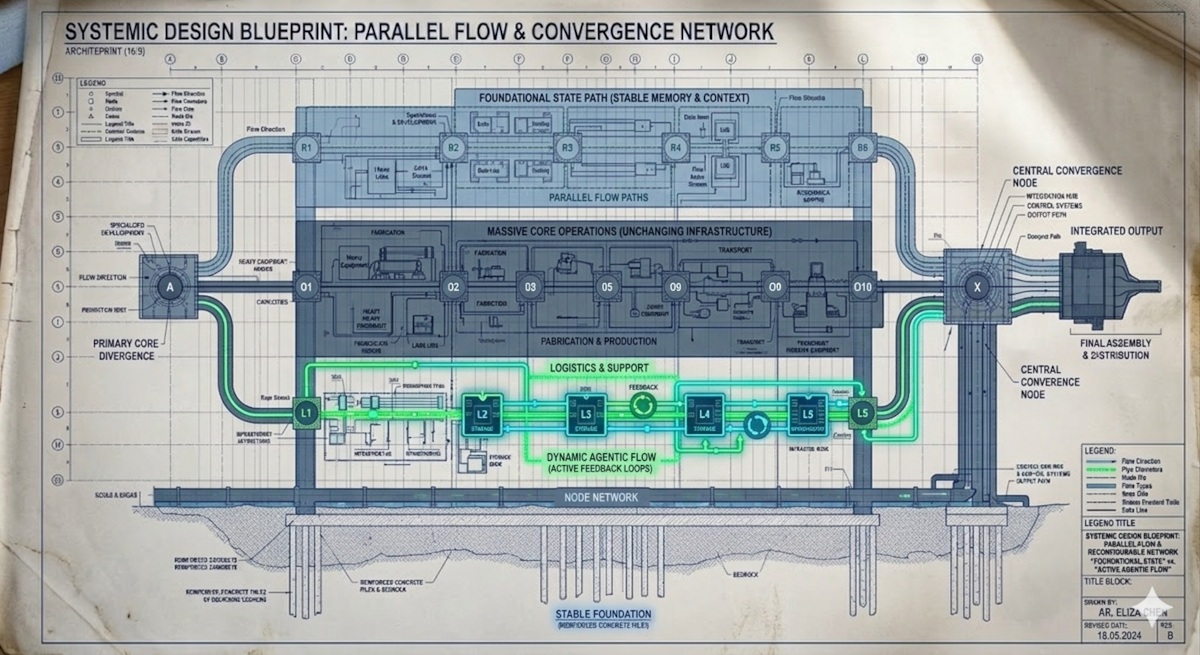

The Engine Room: Efficiency and Architectural Power

Gemma 4's combination of high intelligence and very long context is rooted in architectural techniques that maximize intelligence per parameter.

Mixture of Experts (MoE) Efficiency

For advanced users, the Mixture of Experts (MoE) architecture is a game-changer. Some versions, such as the 26B MoE model, use this structure:

- How it Works: While the model's total parameter count may reach 26 billion, inference activates only a smaller subset of specialized experts (e.g., 4 billion parameters) for each token, with a router deciding which experts to run.

- The Benefit: Because per-token compute scales with the active parameters rather than the total, the model can deliver high intelligence at a fraction of the computational cost.
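The routing idea above can be sketched in a few lines. This is a minimal, generic top-k MoE illustration, not Gemma 4's actual router: the function names, the toy experts, and the choice of `k=2` are all assumptions made for the example.

```python
import math


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    # Softmax over the selected logits only, so the k weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_layer(x, experts, router_logits, k=2):
    """Run only the selected experts for one token and mix their outputs."""
    routes = top_k_route(router_logits, k)
    out = [0.0] * len(x)
    for idx, weight in routes:
        y = experts[idx](x)  # experts that were not selected are never called
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, [idx for idx, _ in routes]


def active_fraction(total_params, active_params):
    """Share of parameters that participate in a single token's forward pass."""
    return active_params / total_params


# Toy experts (hypothetical): each one just scales its input by a constant.
toy_experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
mixed, used = moe_layer([1.0, 2.0], toy_experts, [0.1, 2.0, -1.0, 2.0], k=2)
print("experts used:", used, "output:", mixed)
print("active share for 4B of 26B:", round(active_fraction(26e9, 4e9), 3))
```

With the figures quoted above (4 billion active out of 26 billion total), roughly 15% of the parameters take part in each token's computation. The full set of weights still has to be stored somewhere, so the saving is in compute per token rather than in model size.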

Tailored Scaling for Every Device

Gemma 4 is built on the premise that "one size fits all" does not work for AI.

- Dense vs. MoE: Power users can choose between the lower per-token compute of an MoE variant and the predictable behavior of a dense model (like the 31B variant), based on specific application needs.

- Edge Optimization: Crucially, smaller variants are specifically optimized for efficient, native execution on local devices, ensuring that powerful AI is accessible even on laptops and mobile hardware.

The Game Changer: TurboQuant and the Memory Bottleneck

If the capability of Gemma 4 is the massive brain, TurboQuant is the revolutionary power supply that allows it to run anywhere.

The Problem: The KV Cache Bottleneck

Understanding why Gemma 4 is so efficient requires understanding the single biggest limiting factor in modern LLM deployment: the Key-Value (KV) Cache.

How it Works: During LLM inference (generating text token by token), the model stores the Key (K) and Value (V) tensors computed for every previous token, from both the prompt and the generated output. This cache is essential because it spares the model from recomputing attention inputs for the whole sequence each time a new token is generated.

The Core Issue: While this caching technique is an optimization, it has a physical limitation: it grows linearly with context length. Every extra token—whether from a massive prompt or an extended conversation—requires more VRAM. This constant, linear accumulation eventually exhausts the available GPU memory, leading to an operational halt or severe slowdown. This memory saturation is the primary constraint preventing large context models from running on consumer hardware.
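To make the linear growth concrete, here is a rough back-of-the-envelope estimate. It assumes a plain attention stack in which every layer caches keys and values for every token, and the layer count, KV head count, and head dimension are hypothetical placeholders, not Gemma 4's published configuration.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bits_per_element=16):
    """Estimate KV cache size: one K and one V vector per layer, head, and token."""
    elements = 2 * layers * kv_heads * head_dim * tokens  # factor 2 = K plus V
    return elements * bits_per_element / 8


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 48, 8, 128

for tokens in (8_192, 65_536, 262_144):
    gib = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 1024**3
    print(f"{tokens:>8} tokens -> {gib:6.1f} GiB at 16 bits per element")
```

Under these assumed dimensions the cache costs 192 KiB per token, so a 262,144-token context needs 48 GiB for the cache alone, before counting the model weights. Real architectures often reduce this with techniques such as sliding-window layers, so treat the numbers as an upper-bound style illustration.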

The Solution: TurboQuant Compression

Google Research addresses this bottleneck with TurboQuant, a specialized compression algorithm for the KV cache.

TurboQuant operates by drastically reducing the precision of the stored KV cache data without requiring any model retraining or fine-tuning.

- Extreme Compression: TurboQuant can quantize this critical cache down to as low as 3 bits per element.

- Massive Efficiency Gains: This compression results in phenomenal memory savings, capable of cutting the KV cache memory usage by up to 6 times.
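The sketch below shows what low-bit quantization of cached values looks like in general. It is a plain uniform min-max quantizer, swapped in purely for illustration; it is not the TurboQuant algorithm, whose internals are not described here, and all function names are hypothetical.

```python
def quantize(values, bits=3):
    """Map floats to integer codes in [0, 2**bits - 1] using a min-max range."""
    levels = (1 << bits) - 1  # highest code: 7 when bits=3
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels
    if scale == 0.0:
        scale = 1.0  # all values identical; any scale reconstructs them
    codes = [min(levels, max(0, round((v - lo) / scale))) for v in values]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from the stored codes."""
    return [lo + c * scale for c in codes]


def compression_ratio(baseline_bits=16, quant_bits=3):
    """Ideal ratio from bit width alone, ignoring per-block scale/offset storage."""
    return baseline_bits / quant_bits


sample = [-1.0, -0.5, 0.0, 0.25, 1.0]
codes, lo, scale = quantize(sample, bits=3)
restored = dequantize(codes, lo, scale)
print("codes:", codes)
print("max error:", max(abs(a - b) for a, b in zip(sample, restored)))
print("ideal ratio vs 16-bit:", round(compression_ratio(), 2))
```

Going from 16 bits to 3 bits per element gives an ideal ratio of about 5.3 from bit width alone. The "up to 6 times" figure quoted above is reported as given and presumably depends on baseline and accounting details not covered in this article.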

TurboQuant Integration: Performance Redefined

The application of TurboQuant is not theoretical; it is a quantifiable breakthrough:

- Benchmark Victory: Reported benchmarks show Gemma 4 with 3-bit KV cache compression handling extreme contexts (e.g., 262K tokens, which matches the 256K window, since 256 × 1,024 = 262,144) while running entirely on a single consumer GPU and keeping memory usage within VRAM limits.

- Speed and Density: This quantization also enables significant decoding speedups, sustaining high throughput (reported rates like 120 t/s) without additional memory overhead, even when processing huge documents.

In short: TurboQuant liberates the power of massive context models, making them viable for accessible, consumer-grade hardware.

Deployment Freedom: From Cloud Center to Edge Device

Gemma 4 is built for maximum deployment flexibility, supporting seamless use cases from massive cloud infrastructure down to the smallest edge device.

Enterprise and Cloud Integration (Maximum Power)

For regulated, large-scale environments, Google Cloud offers specialized tools that pair Gemma 4's multi-step planning abilities with strong isolation. Developers can use the GKE Agent Sandbox to execute LLM-generated code safely inside highly isolated, Kubernetes-native environments. This setup is designed to deliver the rapid cold starts and high throughput that mission-critical workloads require.

On-Device and Local Execution (Maximum Privacy)

One of the model's biggest selling points is its ability to run completely locally. This removes the network round trip to a remote service and keeps data on the device.

- Local First: Smaller, optimized models are engineered for efficient operation on mobile and edge devices.

- Privacy Guarantee: For applications handling sensitive data, running the model locally means prompts and outputs never have to leave the premises, which removes an entire class of data-exposure risk.

- Rapid Prototyping: Developers can utilize accessible platforms like Ollama or LM Studio to run models instantly on personal machines.
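As a minimal sketch of local prototyping, the snippet below sends a prompt to a locally running Ollama server over its REST endpoint (`/api/generate` on the default port 11434). The model tag `gemma4` is a placeholder assumption; check the tag actually published in the Ollama library before using it.

```python
import json
import urllib.request

# Ollama's default local endpoint; change it if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="gemma4"):
    """Build a non-streaming generate request. The model tag is a placeholder."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="gemma4"):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Summarize this paragraph: ...")` requires the Ollama server to be running and the model to be pulled already. Nothing in the request leaves the machine, which is the privacy property described above.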

The Total Advantage

The combination of Gemma 4's sophisticated architecture and TurboQuant's revolutionary compression technology delivers three major benefits for developers:

- Unrivaled Accessibility: By dramatically lowering the memory footprint, Gemma 4 makes running powerful AI agents viable on local, consumer-grade hardware, removing the reliance on massive data center clusters.

- Performance Under Pressure: The ability to sustain high throughput even with extremely large contexts (e.g., 256K tokens) is critical for deep document analysis and long-term conversational AI.

- Zero-Friction Deployment: TurboQuant delivers large memory and speed improvements without requiring any fine-tuning or retraining, which shortens both prototyping and deployment time.

Gemma 4 is not just another open model—it is the comprehensive solution for scaling intelligence, maintaining privacy, and conquering the memory bottleneck of modern AI.

Dallum Brown

Writer and curator exploring the impact of technology on everyday life.
